As more developers find that vibe coding produces substandard results, more of them are looking to cognitive architecture for solutions.

The $50 Billion Question Nobody's Asking

Corporate America has a problem. It has invested billions in AI infrastructure and deployed large language models across every department from legal to customer service, yet the results are underwhelming. Consultants project large long-term gains (McKinsey estimates $4.4 trillion in potential value, ARK Invest forecasts 140% productivity boosts), but actual enterprise deployments tell a different story. Recent Federal Reserve research found that workers using generative AI saved only 5.4% of their work hours, which works out to about 1.4% of total hours across the entire workforce. Even optimistic reports from financial services show average gains of only 20%. The culprit? A fundamental misunderstanding of what makes AI capable of sustained, intelligent work.

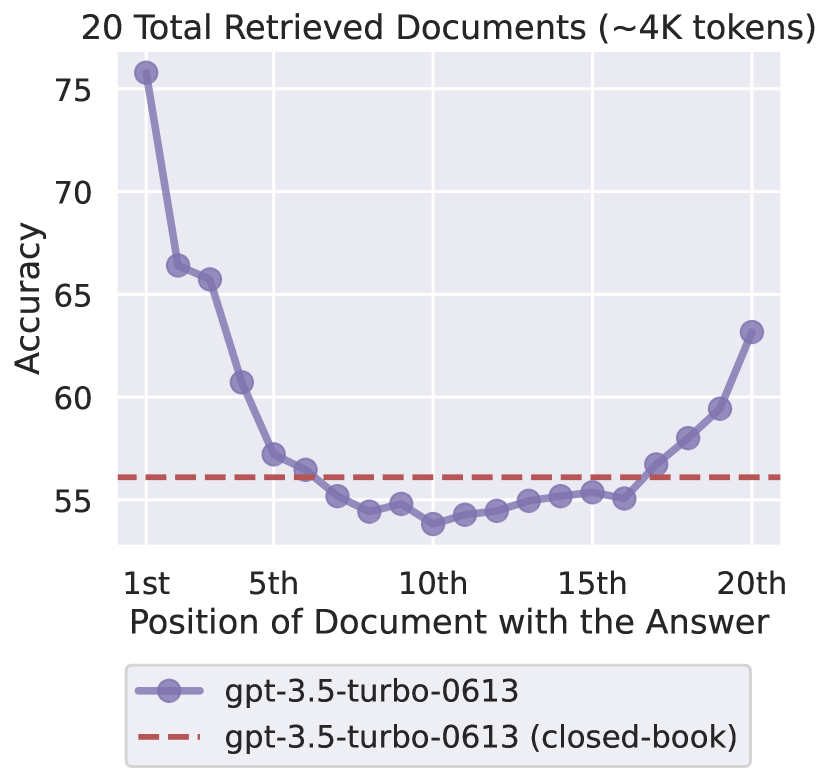

The numbers tell a stark story. Context windows have expanded from 4,000 tokens to over 2 million in just two years, a 500x increase, yet research shows that effective information retrieval degrades well before these theoretical limits. Stanford's "Lost in the Middle" research by Nelson F. Liu et al. demonstrated that LLMs exhibit a U-shaped performance curve: accuracy is highest when critical information sits at the start or end of a long context, and drops by up to 50% when it sits in the middle. Red Hat's benchmarking of the Granite-3.1-8B model found that its effective context length, the range over which it maintains reliable performance, is approximately 32,000 tokens, with significant degradation beyond that threshold despite a theoretical 128K window.

The industry's response? Make the windows even bigger. But this misses the real challenge that even OpenAI's leadership acknowledges.

"We're not there yet on true long-horizon tasks," Sam Altman has acknowledged, noting that current AI is "like an intern that can work for a couple of hours" while the goal is systems that can work autonomously "for a couple of days" or more. He predicts 2025 will see "the first AI agents join the workforce"—implicitly confirming that today's systems lack the sustained cognitive capabilities needed for extended, autonomous work.

The Architecture of Forgetting: Why Scale Fails

To understand why massive context windows fail as a solution for complex, extended tasks, consider the fundamental architecture of transformer-based LLMs. These models use attention mechanisms to process information—essentially determining which parts of the input to focus on when generating responses. But attention doesn't scale linearly.

When a text sequence doubles in length, standard self-attention requires roughly four times as much compute, and in a naive implementation four times as much memory, because every token is compared with every other token. This quadratic scaling isn't just a computational inconvenience. It is a fundamental barrier to the kind of sustained intelligence needed for long-horizon tasks.
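
The quadratic growth can be checked with a few lines of arithmetic. The sketch below counts only the entries in one attention head's score matrix for standard dense self-attention; the function names are illustrative, and it deliberately ignores the linear-cost parts of a transformer as well as memory-saving attention implementations.

```python
def attention_score_entries(seq_len: int) -> int:
    """Entries in one attention head's score matrix: one per token pair."""
    return seq_len * seq_len


def scaling_factor(old_len: int, new_len: int) -> float:
    """How much the score matrix grows when the sequence length changes."""
    return attention_score_entries(new_len) / attention_score_entries(old_len)


if __name__ == "__main__":
    for n in (4_000, 8_000, 128_000, 2_000_000):
        print(f"{n:>9,} tokens -> {attention_score_entries(n):,} score entries per head")
    print(scaling_factor(4_000, 8_000))      # doubling the length gives 4.0
    print(scaling_factor(4_000, 2_000_000))  # 500x the length gives 250000.0
```

Under these assumptions, a 500x longer context means a 250,000x larger score matrix, which is why raw window size gets expensive so quickly.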

Stanford's "Lost in the Middle" research quantified what practitioners already suspected: LLMs exhibit a U-shaped performance curve, where information in the middle of long contexts is far less likely to be used. Performance drops by up to 50% when critical information sits between the 30th and 70th percentile of the context window.

Even more problematic is the distraction effect. OpenAI's own documentation for their latest reasoning models acknowledges this limitation, recommending users include "only the most relevant information to prevent the model from overcomplicating its response."

The implication is clear: throwing more tokens at the problem doesn't create the persistent, goal-oriented intelligence needed for long-horizon tasks—it creates noise.

The Long-Horizon Challenge

Long-horizon tasks—projects that unfold over days, weeks, or months—represent the holy grail of AI productivity. These are the complex endeavors that define knowledge work: conducting comprehensive research, developing software systems, managing multi-phase projects, or creating detailed strategic analyses.

Current LLMs fail at these tasks not because they lack intelligence, but because they lack the cognitive infrastructure to support sustained effort. Consider what a human brings to a long-term project:

- Goal persistence: Maintaining objectives across multiple work sessions

- Progressive refinement: Building on previous work rather than starting fresh

- Adaptive planning: Adjusting strategies based on intermediate results

- Selective attention: Focusing on relevant information while ignoring distractions

- Knowledge accumulation: Learning from the project itself as it progresses

None of these capabilities emerge from simply having a larger context window. They require architectural innovations that mirror the cognitive systems that enable human intelligence.

The Cognitive Architecture Revolution

While Silicon Valley giants competed on context size, a parallel development emerged from cognitive science research: what if, instead of giving LLMs bigger notebooks, we gave them actual memory systems designed for long-horizon work?

This shift represents more than an optimization—it's a fundamental reconceptualization of how AI systems should operate. Instead of a monolithic context window, cognitive architectures implement multiple specialized memory systems that work together to enable sustained, intelligent effort:

Working Memory maintains immediate task context, typically just 5-10 messages, and gives it the highest priority when the model's input is assembled. This mirrors the classic estimate of 7±2 items for human working memory and keeps the system focused on current objectives without distraction.

Episodic Memory stores specific interaction sequences, compressed and indexed for similarity-based retrieval. Unlike flat context, these memories can be selectively accessed based on relevance to current goals, allowing the system to reference past work without processing thousands of irrelevant tokens.

Semantic Memory builds persistent knowledge structures, creating conceptual maps that link related information across time and sessions. This enables the kind of knowledge accumulation essential for long-horizon tasks, where understanding deepens over time.

Procedural Memory encodes learned patterns and workflows, allowing the system to recognize and adapt successful strategies. This is crucial for multi-phase projects where similar sub-tasks recur with variations.

The real innovation lies in the Memory Controller—a meta-cognitive layer that manages information flow between these systems, deciding what to store, what to retrieve, and crucially, what to forget. This selective management is what enables sustained focus on long-term objectives.
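
To make the division of labor concrete, here is a minimal sketch of the four stores and a controller that moves information between them. Everything in it is an assumption for illustration: the class and method names are hypothetical, and word overlap stands in for the embedding-based similarity search a real system would more likely use.

```python
from collections import deque
from dataclasses import dataclass, field


def overlap(a: str, b: str) -> int:
    """Crude relevance score: number of shared lowercase words."""
    return len(set(a.lower().split()) & set(b.lower().split()))


@dataclass
class MemoryController:
    working_capacity: int = 7
    working: deque = field(default_factory=deque)   # most recent messages
    episodic: list = field(default_factory=list)    # older interactions
    semantic: dict = field(default_factory=dict)    # concept -> list of facts
    procedural: dict = field(default_factory=dict)  # task name -> list of steps

    def observe(self, message: str) -> None:
        """Add a message; overflow from working memory moves to episodic."""
        self.working.append(message)
        while len(self.working) > self.working_capacity:
            self.episodic.append(self.working.popleft())

    def learn_fact(self, concept: str, fact: str) -> None:
        self.semantic.setdefault(concept, []).append(fact)

    def learn_procedure(self, name: str, steps: list) -> None:
        self.procedural[name] = list(steps)

    def recall(self, query: str, k: int = 2) -> list:
        """Return up to k episodic memories that share words with the query."""
        scored = [(overlap(query, e), i, e) for i, e in enumerate(self.episodic)]
        relevant = [t for t in scored if t[0] > 0]
        relevant.sort(key=lambda t: (-t[0], t[1]))  # best score first, then oldest
        return [e for _, _, e in relevant[:k]]

    def build_context(self, query: str) -> dict:
        """Assemble only what is relevant to the query, not the full history."""
        concepts = [c for c in self.semantic if c.lower() in query.lower()]
        return {
            "working": list(self.working),
            "episodic": self.recall(query),
            "semantic": {c: self.semantic[c] for c in concepts},
        }


if __name__ == "__main__":
    mc = MemoryController(working_capacity=2)
    for msg in ["budget approved for Q3", "lunch order placed",
                "draft outline done", "review outline tomorrow"]:
        mc.observe(msg)
    mc.learn_fact("budget", "Q3 budget is fixed")
    print(mc.build_context("what is the budget status"))
```

The design choice worth noting is that `build_context` never returns the full history: the irrelevant "lunch order placed" message stays in storage but is kept out of the assembled context.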

The Compression Imperative: Intelligence Through Information Density

A counterintuitive claim behind cognitive architectures is that intelligence for long-horizon tasks correlates less with how much information a system can access than with how efficiently it can compress and organize that information.

Consider a typical long-horizon scenario: a multi-week research project. A traditional LLM approach would attempt to maintain all research notes, sources, analyses, and iterations in its context window—quickly overwhelming even a 2-million-token capacity. But most of this information is redundant, outdated, or irrelevant to the current phase of work.

Cognitive architectures take a different approach through intelligent compression. A two-hour strategy meeting that generates 20,000 tokens of transcript gets compressed to perhaps 200 semantic tokens representing key decisions, action items, and contextual relationships. That is a 100:1 compression ratio, and the goal is to lose as little actionable information as possible.

This compression isn't just about efficiency; it's about enabling the kind of abstract thinking necessary for complex tasks. By distilling information to its semantic essence, the system can identify patterns, make connections, and build insights that would be impossible when drowning in raw data.
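
As a toy illustration of the idea, the sketch below keeps only transcript lines tagged as decisions or action items and reports the resulting ratio. The `Decision:`/`Action:` prefix convention is a hypothetical stand-in for whatever summarizer a real system would use (most likely an LLM), and whitespace word counts stand in for a real tokenizer.

```python
KEY_PREFIXES = ("decision:", "action:")  # hypothetical tagging convention


def count_tokens(lines: list) -> int:
    """Whitespace word count, a rough stand-in for a real tokenizer."""
    return sum(len(line.split()) for line in lines)


def compress_transcript(lines: list) -> list:
    """Keep only the lines tagged as decisions or action items."""
    return [ln.strip() for ln in lines
            if ln.strip().lower().startswith(KEY_PREFIXES)]


def compression_ratio(raw_tokens: int, compressed_tokens: int) -> float:
    return raw_tokens / compressed_tokens


transcript = [
    "So, thanks everyone for joining today.",
    "Decision: launch the pilot in March.",
    "I think the weather has been strange lately.",
    "Action: Dana drafts the rollout plan by Friday.",
]
summary = compress_transcript(transcript)

if __name__ == "__main__":
    print(summary)
    print(compression_ratio(count_tokens(transcript), count_tokens(summary)))
    print(compression_ratio(20_000, 200))  # the meeting example from the text
```

The toy transcript compresses far less than the 100:1 figure in the meeting example; how much a real transcript compresses depends on how much of it is actually decisions and commitments.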

The Hierarchy Hypothesis: Why Structure Beats Scale for Long-Horizon Work

The emerging consensus among AI researchers is that long-horizon intelligence requires hierarchical organization, not just raw capacity. This "Hierarchy Hypothesis" suggests that the ability to work on extended tasks emerges from the interaction between specialized memory systems operating at different levels of abstraction.

Consider how humans approach a long-term project like writing a book:

- Strategic level: Overall narrative arc and thematic goals

- Tactical level: Chapter outlines and character development

- Operational level: Scene-by-scene execution

- Technical level: Sentence construction and word choice

Each level maintains its own goals, constraints, and memory requirements. A flat context window treats all these levels equally, creating confusion and inefficiency. Cognitive architectures maintain separation and hierarchy, allowing the system to operate at the appropriate level of abstraction for each phase of work.
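
One way to picture this separation is a goal tree in which each unit of work sees only its own chain of parent goals. The sketch below is an assumption-laden illustration, not a description of any particular system; `GoalNode` and its methods are hypothetical names.

```python
from dataclasses import dataclass, field
from typing import Optional


@dataclass
class GoalNode:
    level: str   # e.g. "strategic", "tactical", "operational"
    goal: str
    parent: Optional["GoalNode"] = field(default=None, repr=False, compare=False)
    children: list = field(default_factory=list, repr=False, compare=False)

    def add(self, level: str, goal: str) -> "GoalNode":
        """Attach a sub-goal one level down and return it."""
        child = GoalNode(level, goal, parent=self)
        self.children.append(child)
        return child

    def context_path(self) -> list:
        """Goals from the root down to this node, skipping sibling branches."""
        node, path = self, []
        while node is not None:
            path.append(f"{node.level}: {node.goal}")
            node = node.parent
        return list(reversed(path))


book = GoalNode("strategic", "finish the novel's narrative arc")
chapter3 = book.add("tactical", "outline chapter 3")
chapter4 = book.add("tactical", "outline chapter 4")
scene = chapter3.add("operational", "write the opening scene")

if __name__ == "__main__":
    for line in scene.context_path():
        print(line)
```

When the system works on the opening scene, the chapter 4 outline never enters its context, while the strategic goal always does.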

The Forgotten Innovation: Strategic Forgetting

Perhaps the most radical requirement for long-horizon tasks is the ability to forget strategically. While context windows preserve everything until they overflow, effective long-term work requires actively pruning irrelevant information.

Human experts don't remember every detail of every project—they abstract principles, patterns, and key insights while letting specifics fade. This "forgetting" is what allows them to maintain focus on current objectives without being overwhelmed by historical details.

Cognitive architectures implement controlled forgetting at multiple levels:

- Token-level: Removing filler words and redundancies

- Message-level: Consolidating exchanges into summary representations

- Session-level: Abstracting conversations into outcomes and decisions

- Pattern-level: Extracting recurring themes while discarding instances

This selective retention is essential for long-horizon tasks where the volume of generated information would otherwise overwhelm any system's ability to maintain focus.
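
Two of these levels are easy to sketch. The example below strips filler words (token-level) and prunes stored items whose retention score has decayed below a threshold. The exponential half-life rule, the filler list, and all names are assumptions chosen for illustration; the article does not specify a forgetting policy.

```python
from dataclasses import dataclass

FILLERS = {"um", "uh", "basically", "literally"}  # illustrative, not exhaustive


def strip_fillers(message: str) -> str:
    """Token-level forgetting: drop filler words, keep everything else."""
    kept = [w for w in message.split() if w.lower().strip(",.") not in FILLERS]
    return " ".join(kept)


@dataclass
class MemoryItem:
    text: str
    importance: float  # 0.0 to 1.0, assumed to come from an upstream scorer
    last_used: int     # session number in which the item was last retrieved


def retention_score(item: MemoryItem, now: int, half_life: float = 10.0) -> float:
    """Importance halves for every `half_life` sessions of disuse (assumed rule)."""
    age = now - item.last_used
    return item.importance * 0.5 ** (age / half_life)


def prune(items: list, now: int, threshold: float = 0.2) -> list:
    """Keep only the items whose retention score is still above the threshold."""
    return [it for it in items if retention_score(it, now) >= threshold]


if __name__ == "__main__":
    print(strip_fillers("Um, we should basically ship on Friday"))
    store = [MemoryItem("decision: ship on Friday", 1.0, last_used=0),
             MemoryItem("small talk about lunch", 0.3, last_used=0)]
    print([it.text for it in prune(store, now=10)])
```

Retrieving an item would reset its `last_used` value in a fuller version, so information that keeps proving useful survives while one-off details fade.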

The Implementation Imperative: Building for the Long Horizon

The shift from context-window thinking to cognitive architecture isn't just an academic exercise—it's becoming essential for organizations that want AI to tackle meaningful, complex work.

The requirements for long-horizon AI capability are clear:

- Persistent goal management that survives across sessions

- Progressive knowledge building that accumulates insights over time

- Adaptive strategy selection based on intermediate results

- Selective attention mechanisms that maintain focus despite growing information

- Hierarchical organization that separates strategic from tactical concerns

These aren't features that can be added to existing LLMs through prompt engineering or fine-tuning. They require fundamental architectural changes—the kind of changes that cognitive architectures provide.
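
The first two requirements imply state that lives outside the model and is reloaded at the start of each session. A minimal sketch, assuming a JSON payload as the storage format and hypothetical names throughout:

```python
import json
from dataclasses import asdict, dataclass, field


@dataclass
class ProjectState:
    goal: str
    completed: list = field(default_factory=list)   # finished sub-tasks
    insights: list = field(default_factory=list)    # knowledge gained so far
    next_steps: list = field(default_factory=list)  # plan for the next session

    def to_json(self) -> str:
        return json.dumps(asdict(self))

    @classmethod
    def from_json(cls, payload: str) -> "ProjectState":
        return cls(**json.loads(payload))


def end_session(state: ProjectState, done: list, learned: list, plan: list) -> str:
    """Record what happened, replace the plan, and return the saved payload."""
    state.completed.extend(done)
    state.insights.extend(learned)
    state.next_steps = list(plan)
    return state.to_json()


if __name__ == "__main__":
    state = ProjectState(goal="survey long-context benchmarks")
    saved = end_session(state,
                        done=["collect candidate papers"],
                        learned=["results vary by position in context"],
                        plan=["compare evaluation setups"])
    resumed = ProjectState.from_json(saved)  # a later session starts here
    print(resumed.goal, resumed.next_steps)
```

The goal and the accumulated insights survive the session boundary without any of the original conversation being replayed.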

The Economic Reality of Long-Horizon AI

The financial implications of enabling true long-horizon AI work are transformative. Current approaches that attempt to maintain entire project contexts in active memory can cost thousands of dollars per task in token fees alone. At $15 per million input tokens, the list price of Claude 3 Opus, a single query that fills Claude's 200,000-token context window costs approximately $3 in input tokens alone. A month-long research project that keeps all information in context and issues hundreds of such queries a day could run to tens of thousands of dollars in API costs, and that's before considering the latency issues that make such an approach impractical anyway.

Cognitive architectures flip this equation. By maintaining compressed representations and retrieving only relevant information for each work session, the same project might cost a few hundred dollars—making it economically viable to deploy AI for the complex, extended tasks that actually move the needle on productivity. More importantly, by avoiding the latency penalties of massive context processing, these systems can maintain the responsiveness needed for interactive work.
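
The comparison is simple arithmetic, sketched below. All figures are assumptions for illustration: a price of $15 per million input tokens, a full context of 200,000 tokens, a retrieved context of 4,000 tokens, and 1,000 queries per project. Substitute current pricing and your own workload before drawing conclusions.

```python
def input_cost_usd(tokens: int, price_per_million_usd: float) -> float:
    """Input-token cost of one query; output tokens are not counted."""
    return tokens * price_per_million_usd / 1_000_000


def project_cost_usd(queries: int, tokens_per_query: int,
                     price_per_million_usd: float) -> float:
    """Input-token cost of a whole project at a fixed context size."""
    return queries * input_cost_usd(tokens_per_query, price_per_million_usd)


PRICE = 15.0      # assumed USD per million input tokens
QUERIES = 1_000   # assumed number of queries in the project

full_context = project_cost_usd(QUERIES, 200_000, PRICE)
retrieved_context = project_cost_usd(QUERIES, 4_000, PRICE)

if __name__ == "__main__":
    print(f"full context:      ${full_context:,.2f}")
    print(f"retrieved context: ${retrieved_context:,.2f}")
    print(f"ratio:             {full_context / retrieved_context:.0f}x")
```

Under these assumptions the cost ratio simply equals the ratio of context sizes, so every token kept out of the prompt by retrieval or compression is a direct saving.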

The Path Forward: From Context to Cognition

Sam Altman and other AI leaders acknowledge that today's systems fall short on long-horizon work. The path to AI systems capable of such work isn't through larger context windows; it's through better cognitive architecture. The evidence is mounting:

- Research consistently shows performance degradation in large contexts

- Compression and hierarchical organization outperform raw capacity

- Human cognition provides a proven template for long-horizon intelligence

- Early implementations of cognitive architectures show dramatic improvements

The question isn't whether to adopt cognitive architectures for long-horizon tasks, but how quickly organizations can make the transition. Those that continue to chase context window expansion will find themselves limited to simple, reactive applications. Those that embrace cognitive architecture will unlock AI's potential for the complex, sustained work that defines real value creation.

Conclusion: The End of the Context Window Era

The context window wars are ending not with a victor, but with the recognition that the entire competition was misguided. While tech giants competed to build bigger buckets, the real challenge was building intelligent systems capable of sustained, goal-oriented work over extended periods.

For enterprises seeking transformative AI capabilities, the message is clear: stop optimizing for context size and start building for cognitive sophistication. The promise of AI that can tackle long-horizon tasks—conducting month-long research projects, developing complex systems, managing multi-phase initiatives—isn't about having perfect recall of every token. It's about having the cognitive architecture to maintain goals, build knowledge progressively, and adapt strategies over time.

The future belongs not to the LLMs with the longest memory, but to those with the smartest architecture. And for long-horizon tasks, smart means structured, hierarchical, and selective—exactly what cognitive architectures provide and context windows, no matter how large, cannot.